A suite of three tools works together, combining live animation, camera placement and playback capabilities. With a hand-tracking interface, the system proposes a tangible approach to assembling moving cinematic images.

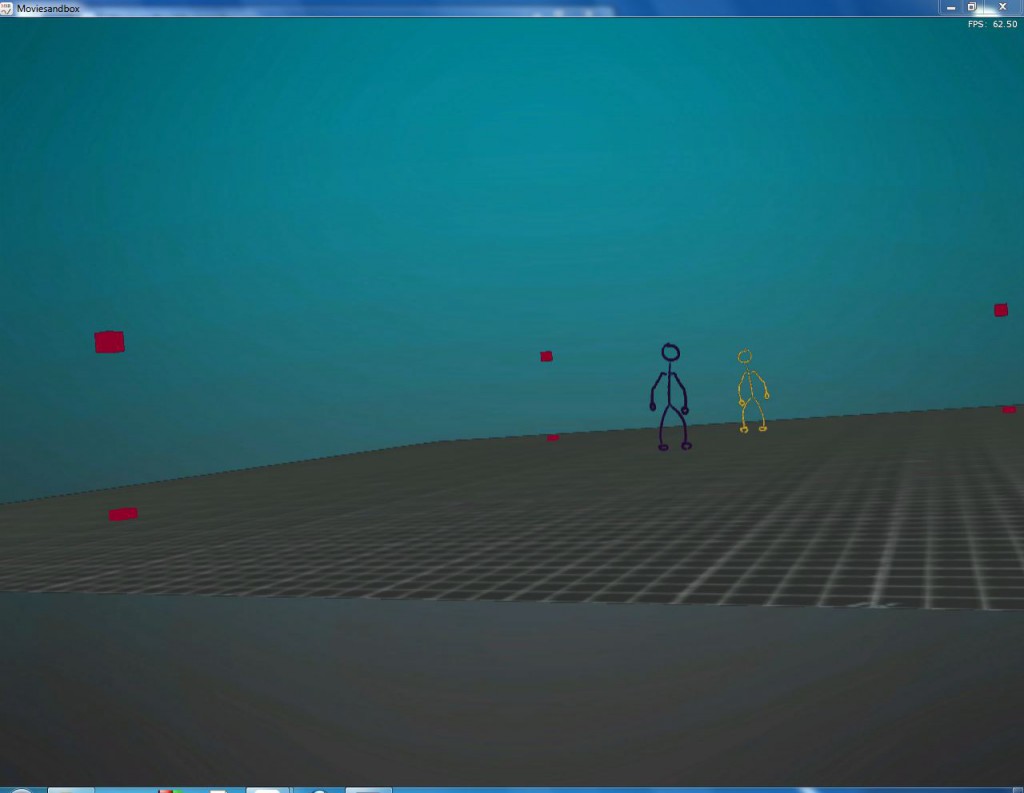

1. Moviesandbox

Moviesandbox is a real-time performance environment using custom hardware and software. It provides the tools and workflows for puppeteering, content creation and performance.

Capabilities: camera viewing, importing and saving scene data, real-time animation

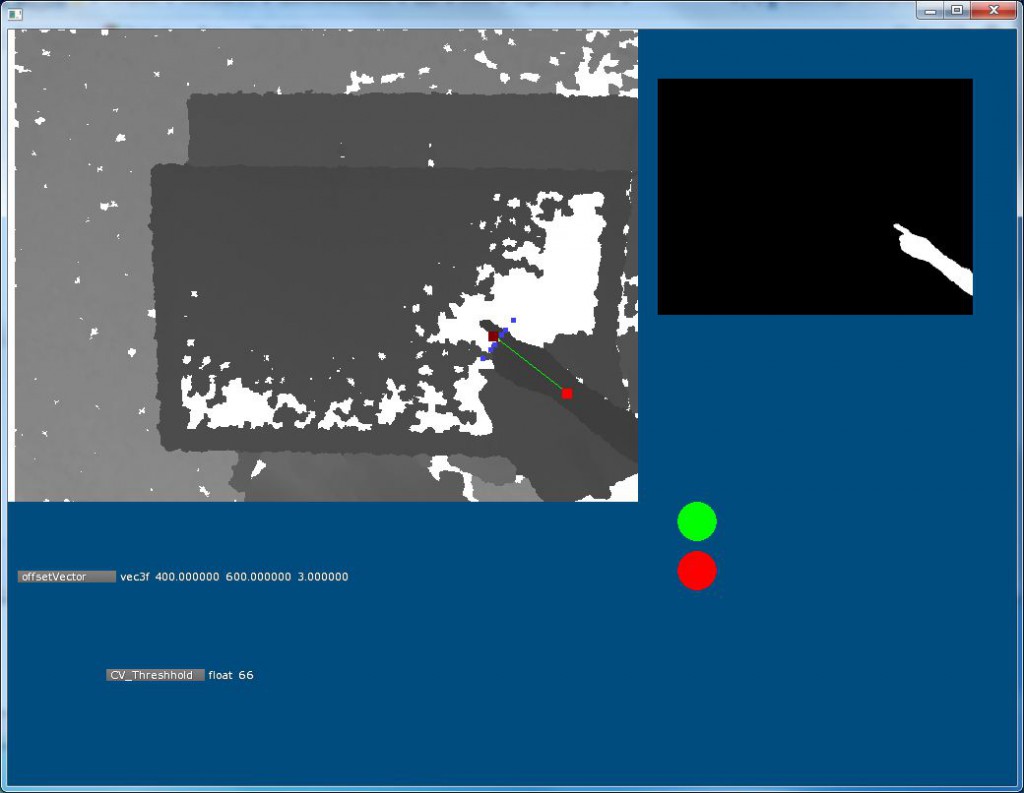

2. Kinect Finger Tracker

The finger tracking program detects the position and rotation of hands outstretched over a staging area and maps these coordinates to control cameras rendered in both the real-time 3D environment (Moviesandbox) and the aerial overview (Graphical User Interface).

Capabilities: tangible tracking, broadcasting information to networked components
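
The mapping from a tracked hand to a camera pose can be sketched roughly as follows. This is a minimal illustration, not the project's actual code: the 640x480 frame size, the 10x10 stage dimensions, the `CameraPose` fields and the JSON-over-UDP message format are all assumptions made for the example, and the names `hand_to_camera`, `encode_pose` and `send_pose` are hypothetical.

```python
import json
import socket
from dataclasses import dataclass, asdict


@dataclass
class CameraPose:
    camera_id: int
    x: float        # stage units, left to right (assumed convention)
    z: float        # stage units, front to back (assumed convention)
    yaw_deg: float  # rotation about the vertical axis, in [0, 360)


def map_range(value, in_min, in_max, out_min, out_max):
    """Linearly map value between ranges, clamping to the output range."""
    t = (value - in_min) / (in_max - in_min)
    t = max(0.0, min(1.0, t))
    return out_min + t * (out_max - out_min)


def hand_to_camera(camera_id, hand_px, hand_py, hand_angle_deg,
                   frame_w=640, frame_h=480, stage_w=10.0, stage_d=10.0):
    """Convert a hand's pixel position and rotation into a stage-space pose.

    Frame and stage sizes are illustrative defaults, not measured values.
    """
    x = map_range(hand_px, 0, frame_w, -stage_w / 2, stage_w / 2)
    z = map_range(hand_py, 0, frame_h, -stage_d / 2, stage_d / 2)
    return CameraPose(camera_id, x, z, hand_angle_deg % 360.0)


def encode_pose(pose):
    """Serialize a pose to bytes (JSON is an assumed wire format)."""
    return json.dumps(asdict(pose)).encode("utf-8")


def send_pose(sock, pose, listeners):
    """Send one pose to every listener, given as (host, port) pairs."""
    payload = encode_pose(pose)
    for address in listeners:
        sock.sendto(payload, address)
```

In this sketch, both Moviesandbox and the GUI would appear in `listeners`, so each hand update reaches both views with the same coordinates. A UDP socket for `send_pose` can be created with `socket.socket(socket.AF_INET, socket.SOCK_DGRAM)`.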

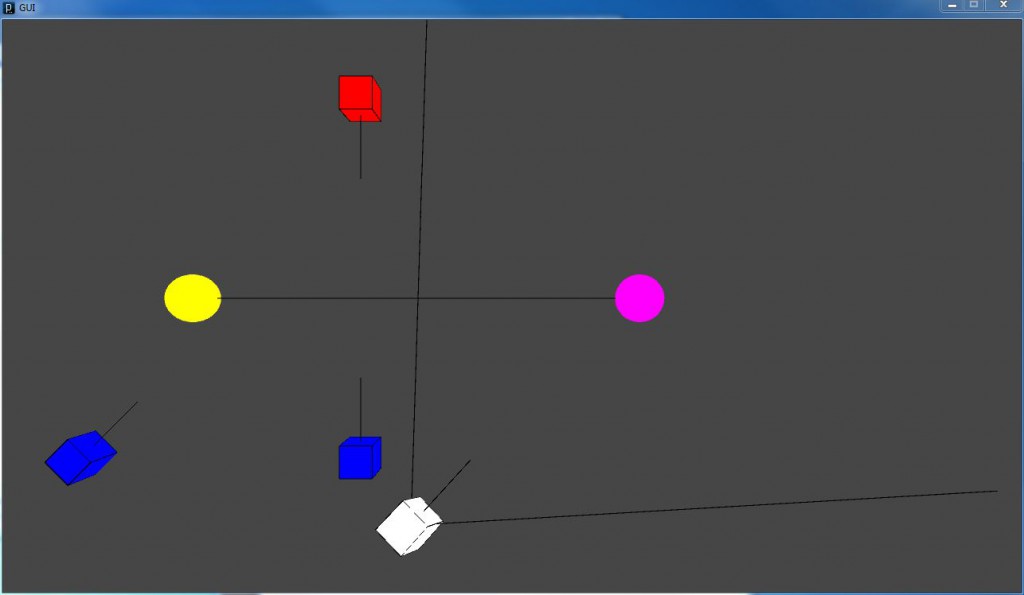

3. Graphical User Interface

This interface complements Moviesandbox by displaying a parallel representation of the assets in a scene. Whereas Moviesandbox shows the scene through the camera viewfinder, the GUI displays an aerial view of the staged cameras. This view visualizes spatial placement, providing context for decisions about where to place the currently selected cameras. Like Moviesandbox, the GUI receives coordinates from the finger tracking program and updates the position of assets in real time. The GUI also displays feedback in real time as the intelligent agent detects temporal and spatial violations of the cinematic canon.
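
To make the idea of a spatial violation concrete, here is one possible check in the aerial (2D) view. The text above does not name the specific rules the agent enforces; the 180-degree rule is used here purely as an assumed example, and the function names are hypothetical. The rule says that consecutive shots of two subjects should stay on the same side of the line connecting them.

```python
def side_of_line(a, b, p):
    """Return +1 if p is left of the directed line a->b, -1 if right, 0 if on it.

    Points are (x, z) tuples in the aerial view.
    """
    cross = (b[0] - a[0]) * (p[1] - a[1]) - (b[1] - a[1]) * (p[0] - a[0])
    return (cross > 0) - (cross < 0)


def crosses_line_of_action(subject_a, subject_b, cam_prev, cam_next):
    """True if a cut from cam_prev to cam_next jumps across the line of action.

    A camera sitting exactly on the line is treated as not violating the rule.
    """
    side_prev = side_of_line(subject_a, subject_b, cam_prev)
    side_next = side_of_line(subject_a, subject_b, cam_next)
    return side_prev != 0 and side_next != 0 and side_prev != side_next
```

With subjects at `(0, 0)` and `(4, 0)`, a cut from a camera at `(1, -3)` to one at `(3, -2)` stays on one side, while a cut to a camera at `(2, 3)` crosses the line and would be flagged.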

With the implementation of playback controls, the GUI will also display a timeline that indicates which camera has control at any point in time. We are experimenting with different interfaces that allow users to toggle between views for completing focused tasks at a point in time, and for assessing an overview of the constructed scene so far.
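
A timeline of this kind could be represented as a sorted list of cut points, each naming the camera that takes control from that moment on. The sketch below is an assumption about one workable data structure, not a description of the planned implementation; `CameraTimeline` and its methods are hypothetical names.

```python
from bisect import bisect_right


class CameraTimeline:
    """Records cuts and answers which camera has control at a given time."""

    def __init__(self):
        self._starts = []   # cut times in seconds, kept sorted
        self._cameras = []  # camera id taking control at each cut

    def cut_to(self, time_s, camera_id):
        """Give camera_id control from time_s until the next cut."""
        i = bisect_right(self._starts, time_s)
        if i > 0 and self._starts[i - 1] == time_s:
            # A cut already exists at this exact time: replace it.
            self._cameras[i - 1] = camera_id
        else:
            self._starts.insert(i, time_s)
            self._cameras.insert(i, camera_id)

    def active_camera(self, time_s):
        """Return the camera in control at time_s, or None before the first cut."""
        i = bisect_right(self._starts, time_s)
        return self._cameras[i - 1] if i > 0 else None
```

Keeping the cut times sorted means a lookup during playback is a binary search, and the same list can be drawn directly as colored segments along the timeline.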

Capabilities: aerial viewing, rule detection and feedback

Built using: Processing