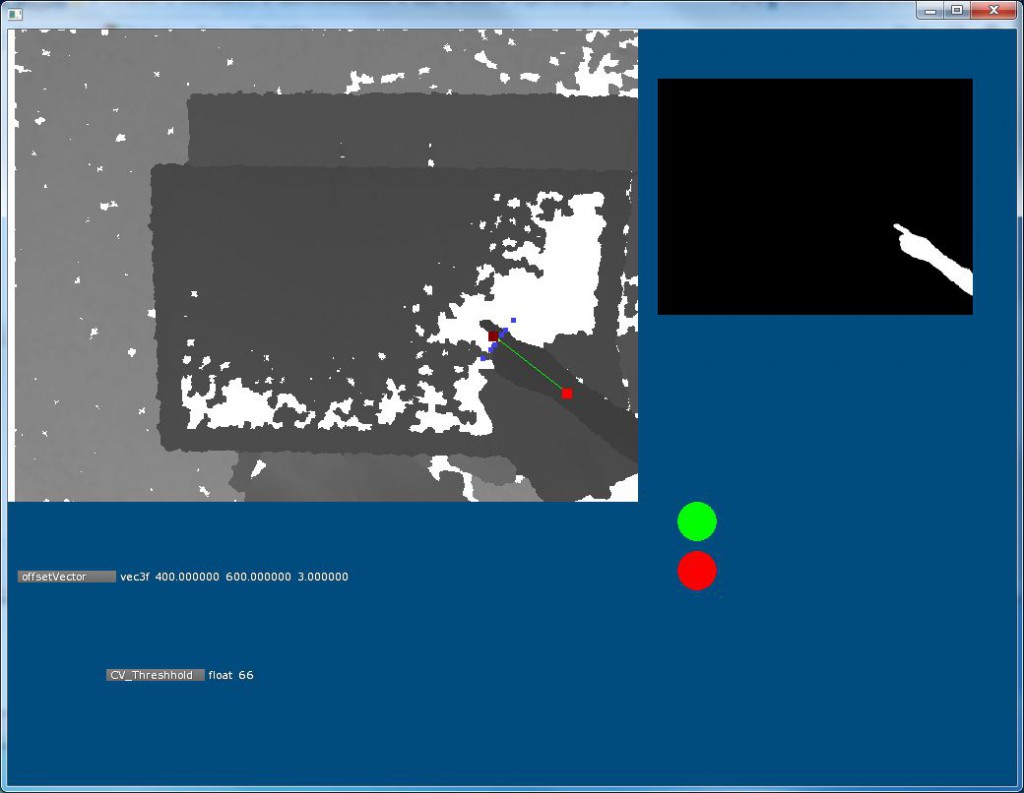

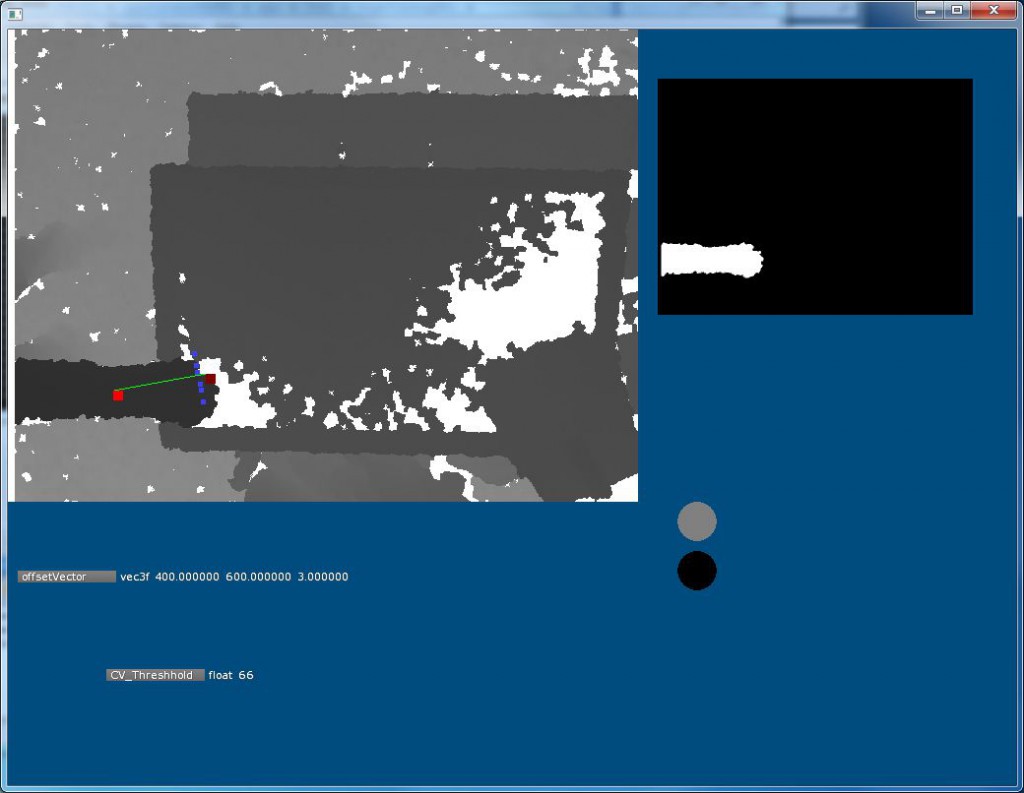

By watching users’ movements in the physical world, the finger-tracking application maps the position and rotation of their fingers to control the location of cameras in the digital filmmaking environment.

This program uses input from the Kinect to detect the position and rotation of a hand outstretched over the staging area. The Kinect is suspended from the ceiling and points downward to capture movement within a six-foot space. The detected coordinates are broadcast to Moviesandbox and to the graphical user interface, which use them to place cameras in the current scene. Our mission is to create an immersive experience by harnessing people’s movement through physical space and mapping it to the placement of digital assets on screen.

We are expanding the system’s finger-tracking capabilities to recognize additional hand gestures for editing and playing back scenes. Users will be able to scrub through a timeline and to select and reposition existing camera angles.

Capabilities: tangible tracking, broadcasting information to networked components

Built using: openFrameworks (C++) and Kinect